IPA Safely Returning to In-Person Research—A 2021 Update

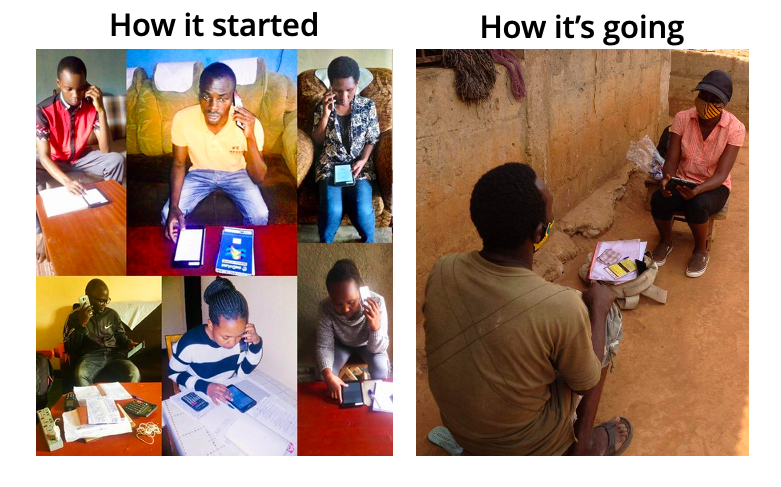

Back in August, we shared IPA’s new policies and procedures for safely re-starting in-person data collection. Now, seven months later, we want to report on how it’s going.

Back then, it felt like we were flying a plane through a storm with no visibility and limited data from our cockpit instruments. Now, with vaccines coming, we can see the skies clearing ahead. But with slow vaccine rollout in areas where IPA works and evolving strains of the virus, we are still very much in the storm. We do have more data now and a lot more experience under our belt, so it’s time to share what we’ve learned and how we expect to navigate the transition to a post-pandemic world.

We have returned, in a limited way

As of mid-March 2021, IPA had approved 63 applications for in-person activities in 17 countries, with the large majority of these activities (83%) being in Africa. Of those outside Africa, the 2 projects approved in Latin America are both small-scale activities in Mexico. The 8 in Asia include 3 in Myanmar and 5 in Bangladesh. All have been modified to adapt to local guidelines and IPA-formulated COVID-19 precautions, whichever are more stringent.

Using data to weigh risks

When we rolled out the in-person data collection approval process late last summer, it was important to use the best available data to assess risk and support decision-making. These data should come from on-the-ground sources, reports from hospitals and health facilities, and local, regional, and international health agencies. In countries where we had concerns about testing or official reporting, we monitored other indicators, like all hospitalizations and excess deaths.

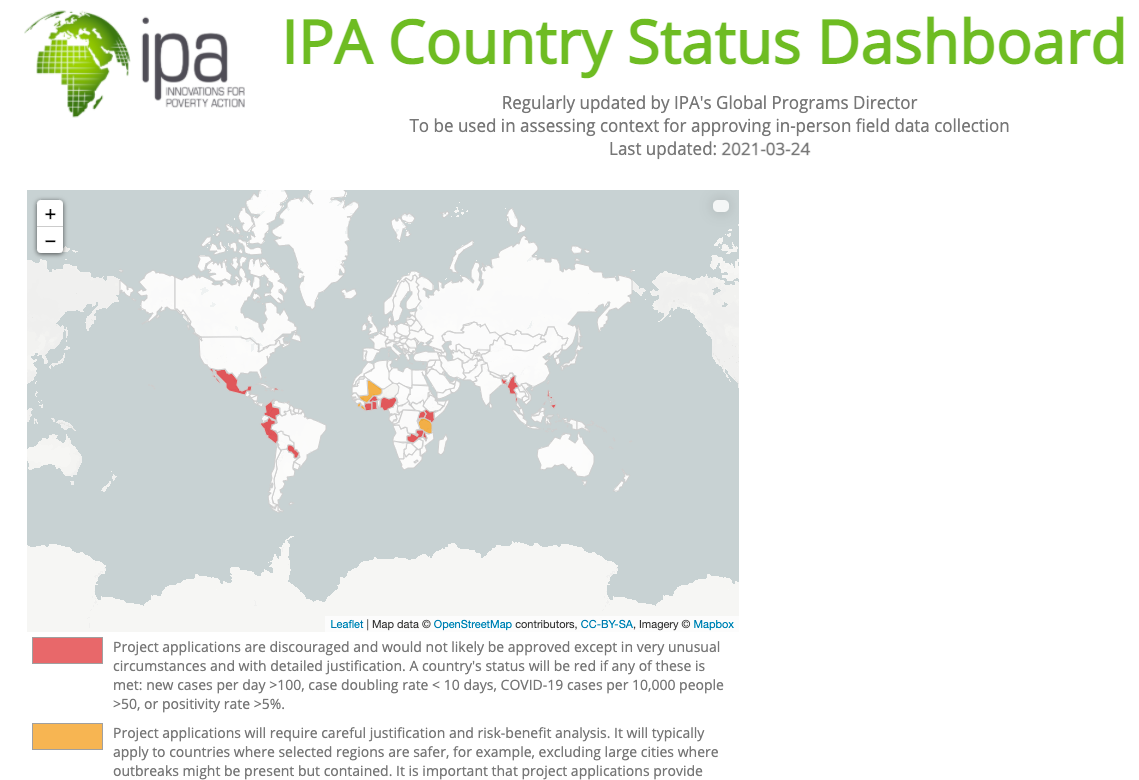

One place where we track and consolidate those data is the IPA Country Status Dashboard, which we recently updated to include a map visualization and links to subnational data sources. It is one important source of information in the in-person data collection request process.

The country status dashboard is a tool that can be consulted by anyone interested in working with IPA on face-to-face data collection. The site was set up to assess COVID risk, but we have been using it to provide updates on risks from any major events, such as the August coup in Mali, the January elections in Uganda, and the February coup in Myanmar. We may continue using the dashboard even after the threat of COVID-19 abates, to share with our partners and donors the ongoing and evolving risks we face in conducting face-to-face research.

Anyone following our risk assessments over the past seven months will notice that we have yet to confer green status on any country. The countries that have spent periods in yellow are mostly in East and West Africa, while most of our 22 countries remain red. That does not mean we don’t work in code-red countries, only that we have set a high bar for project teams to prove that a study is safe.

Returning to the field, carrying out our mission

All this constant monitoring, the careful, extensive deliberation over project approvals, and the rigorous project launch reviews have allowed us to make steady but responsible progress on our mission to bring the best evidence to problems of global poverty. One example is the Bangladesh Mask Project, a large-scale randomized evaluation in which we’re working with government partners to evaluate strategies to increase mask-wearing and to measure the effectiveness of masks in reducing transmission in a real-world setting (as opposed to a lab). Because the public health benefits of the research demanded timely evidence, we made the decision to go back into the field (luckily, and thanks to our safety protocols, none of our enumerators had become infected as of March). Another example is IPA’s collaboration with the Ministry of Education in Liberia to develop and test a learning assessment policy and framework in alignment with the Reformed National Curriculum. This example illustrates a case where community transmission is low, making it possible to return attention to pressing problems in the country.

What we have learned

For the most part, face-to-face data collection has been able to progress in accordance with the COVID-19 protocols for in-person activities that IPA developed and implemented in mid-2020. These protocols have evolved and strengthened over time and have been adapted to the specific conditions of each country and study. IPA’s teams in the countries have collected timely data on compliance with the COVID-19 protocols, assessed their efficacy and ease of implementation, and introduced new protocols to provide increased safety to the field teams and respondents. For example, teams have created detailed health-check guidance for field staff and compiled self-reporting dashboards that helped to track common COVID-19 symptoms and ensure that enumerators are required to self-isolate if symptomatic.

As a result of IPA’s COVID-19 protocol, many studies have incurred higher-than-normal costs, but donors have been patient and understanding, recognizing that doing this work safely costs more. These costs come from, among other things, purchasing personal protective equipment (PPE) for staff and respondents, traveling in smaller groups with more private transportation, and offering income protection to incentivize honest reporting of COVID symptoms or contacts.

The most important lessons we have learned are about what to do when an infection is discovered.

Lesson #1: Follow the plan.

This past month in northern Ghana we discovered our first case of COVID-19 among any field research staff or participants. In this case, two field managers who tested positive were isolated. In accordance with our protocols, we immediately halted field activities, conducted contact tracing, required their immediate contacts to self-isolate, and organized rapid tests for the close contacts. All close contacts of the field managers tested negative; however, the study remained halted for two weeks just to be certain, and we continued to provide income for the members of the field team during that time. Field managers don’t have close contact with respondents, so respondents were fortunately not placed at risk in this case.

Lesson #2: Splitting up teams has extra costs but possible benefits.

As we have all learned, it is difficult to change the normal way of doing things. Our study team in Ghana that had a member test positive was quite large: over 75 people. Because it would have been very difficult to break the team into smaller groups for every single activity (travel, debriefing, eating meals, quality controls), it became necessary to shut down the entire field operation for two weeks, rather than just a portion of the team, when an infection was discovered. Going forward, researchers have to weigh the inefficiencies of breaking field teams into smaller-than-optimal “pods” against the flexibility that comes from being able to take a single pod offline while the rest of the team continues their work (in the event of an infection or contact with an infected person).
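To make the trade-off concrete, here is a rough back-of-the-envelope sketch in Python. It is illustrative only, not an IPA tool: the team size (75, the lower bound mentioned above) and the two-week halt come from the Ghana case, while the pod size of 15 and the function name `person_days_paused` are hypothetical. It also assumes pods never mix, and it ignores the day-to-day inefficiency of running smaller pods.

```python
import math


def person_days_paused(group_size: int, halt_days: int) -> int:
    """Person-days of fieldwork paused when one group is taken offline.

    Assumes the whole group (full team or single pod) stops work for
    halt_days calendar days and that groups never mix with each other.
    """
    if group_size <= 0 or halt_days < 0:
        raise ValueError("group_size must be positive and halt_days non-negative")
    return group_size * halt_days


TEAM_SIZE = 75   # from the text: "over 75 people" (lower bound)
HALT_DAYS = 14   # from the text: a two-week halt
POD_SIZE = 15    # hypothetical pod size, for illustration only

whole_team = person_days_paused(TEAM_SIZE, HALT_DAYS)  # unsplit team halts
one_pod = person_days_paused(POD_SIZE, HALT_DAYS)      # only one pod halts
num_pods = math.ceil(TEAM_SIZE / POD_SIZE)             # pods needed to cover the team

print(f"Whole team offline: {whole_team} person-days paused")
print(f"One of {num_pods} pods offline: {one_pod} person-days paused")
```

Under these assumptions, isolating one pod pauses a fifth as many person-days as halting the full team. A real comparison would also need to include the added travel, lodging, and supervision costs of running pods every day of the study.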

Lesson #3: Act as if anyone could be infected. Don’t relax restrictions.

This experience was a reminder of the importance of assuming that everyone (enumerators, field managers, study participants, and their household members) could be infected with the novel coronavirus even if they are asymptomatic, and of conducting research operations accordingly. The measures we have taken are very basic (diligent observance of 2-meter social distance, outdoor interviews, masks, and handwashing), but the key is to take them seriously, even when we face social pressure, or competition from other survey research firms, to relax them. We work in some environments where local authorities do not require masking or even discourage it, or where they promote only 1.5 or 1-meter separation for social distancing. It is the strict compliance with these measures that allowed us to break the chain of contagion in northern Ghana. It requires resolve to insist on a consistent set of standards, but with training and communication, we can do it.

What’s next

While we can’t let our guard down, we are optimistic about the future. Even though vaccines may be a long way off for the countries where we work, our field teams are getting better and better at navigating modified transportation, lodging, training, interviewing, and data quality monitoring. We know how to design studies around remote data collection, and how to budget for COVID-safe in-person data collection if it’s also needed. We are more diligent about gathering phone information and more prepared for adverse events, like a COVID infection, that require contact tracing and isolation.

At this point, we don’t know if or when mass vaccination will return the world to 2019 conditions, but we do know that even if it does, we have a much deeper toolbox for collecting data, especially given the progress we have made in advancing phone survey methods. We have become far more adept at remote data collection, including:

- Questionnaire design—intro and consent text, item formats, survey length

- Use of audio recordings to audit data quality

- Remote monitoring and supervision of interviewers

- Better recruitment, training, and support of phone interviewers, recognizing the differences in the job requirements for in-person and phone enumerators

- A more nuanced understanding of how to maximize response rates, respondent cooperation, data quality, and representativeness of data

- Routine incorporation of meta-data collection to contribute to methods R&D

- A better understanding of opportunities and limitations of working through proxies, for example, adult caregivers to mediate a child assessment on phone or video, or knowing when an individual can report accurately on household-level variables like income, consumption, distribution of resources, or bargaining power

In the future, we may decide to do more multi-mode research: for example, an in-person baseline with phone follow-up for missed respondents, or phone-based midline or endline surveys with in-person follow-up for missed respondents. Even when the threat of COVID-19 abates, we will have the tools to run multi-mode surveys well, using remote data collection to reduce the cost and increase the frequency and speed of future waves of data collection.