Do You Know Your Future Self?

People often struggle to follow through on things they want to accomplish. When we try to do something like quit smoking, save money, or exercise, many of us have short-term desires that conflict with our long-term goals. To address this, economists have designed and evaluated a wide range of commitment devices. Commitment devices are strategies that allow people to voluntarily “lock themselves in” to a course of action in the future that they might otherwise have trouble following through on. But when designing studies around human failures and upping the stakes, researchers also run a risk.

When we look at the average effects of commitment devices, a body of evidence suggests that they can be effective. But commitment devices can be a double-edged sword. While they encourage people to act according to their goals, those goals can still be hard to meet, and if you fall short, the penalties can be severe. Say I am not a runner, but I want to get in shape for the New York City half marathon in a few months. I make a bet with a friend (or use a commitment website), staking a sum of money that I will forfeit if I do not run the race. Having never run regularly, I do not know how hard it will be to get into shape, but if I bet enough money, I will have to go through with it, right? So, $500? $1,000?

I find out that running regularly is really hard. The race sounded great in theory, but when the moment comes to leave my warm bed and go running at 6 AM before work, it is suddenly less convincing. I learn that even the thought of losing $500 is not enough to get me out of said warm bed regularly enough to prepare. I am now locked into the bet with my friend (or the website). What now?

What is vital with commitment devices is self-knowledge. To set targets for ourselves that we can meet, we need a sense of how we will respond to incentives in the future. And if there is one thing social science has shown us, it is that people are not great at knowing themselves. Especially their future selves.

A study by Anett John, who worked with IPA Philippines, shows what can happen when participants cannot predict their future behavior very well. In the study, the research team partnered with a bank to offer commitment savings accounts to low- and middle-income clients who wanted to save for a major upcoming expense (like annual school fees). The accounts put clients in total control: they set the amount they wanted to save and the date by which to reach it, committed to depositing a certain amount each week to get there, and set a penalty for not sticking to the plan. If they fell more than two deposits behind, the account was closed, and they paid the penalty while getting the rest of their savings back.

A high proportion of the people offered the accounts opened one, and on average those who were offered an account saved a lot more than those who were not. However, many people did not benefit: over half of those who opened an account (55%) defaulted on the savings contract they had set for themselves, forfeiting the penalty they had chosen, typically one to two days' wages.

The research team collected individual measures such as risk aversion and financial literacy, but none of them predicted default. What seems to have happened is that clients who defaulted had overestimated their own self-control and/or underestimated the difficulty they would encounter in saving.

To be clear, enough people succeeded in saving a lot that, when you compare the two groups' average savings, the commitment group saved more, so the program looks successful. But at the individual level, 55% of adopters forfeiting one to two days' wages looks much less like a success for behaviorally informed savings products.
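The gap between the group average and individual outcomes is easier to see with numbers. The short Python sketch below uses entirely hypothetical amounts; the only figure taken from the study is the 55% default rate among adopters. For simplicity, it assumes that defaulters would otherwise have saved as much as a comparable client without the account, and it compares adopters against that single hypothetical baseline rather than reproducing the study's actual comparison between groups.

```python
from statistics import mean

# All amounts below are HYPOTHETICAL, chosen only to illustrate the point.
# The one figure taken from the study is the 55% default rate among adopters.
N_ADOPTERS = 100
N_DEFAULTED = 55          # 55% of adopters defaulted
BASELINE = 100            # hypothetical savings of a similar client without the account
SAVED_IF_SUCCESS = 400    # hypothetical savings of adopters who followed through
SAVED_IF_DEFAULT = 100    # hypothetical savings of defaulters before the penalty
PENALTY = 30              # hypothetical penalty, standing in for 1-2 days' wages


def adopter_outcomes() -> list[int]:
    """Net savings for each adopter, after subtracting any forfeited penalty."""
    succeeded = [SAVED_IF_SUCCESS] * (N_ADOPTERS - N_DEFAULTED)
    defaulted = [SAVED_IF_DEFAULT - PENALTY] * N_DEFAULTED
    return succeeded + defaulted


outcomes = adopter_outcomes()
# Group-level view: average net savings relative to the hypothetical baseline.
average_gain = mean(outcomes) - BASELINE
# Individual-level view: share of adopters who end up below the baseline.
share_worse_off = sum(o < BASELINE for o in outcomes) / N_ADOPTERS

print(f"Average gain vs. baseline: {average_gain:+.1f}")
print(f"Share of adopters worse off: {share_worse_off:.0%}")
```

With these made-up numbers, it prints an average gain of +118.5 alongside a 55% share of adopters who end up worse off. The specific figures do not matter; the point is that a single positive average can hide a majority of adopters who lose money.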

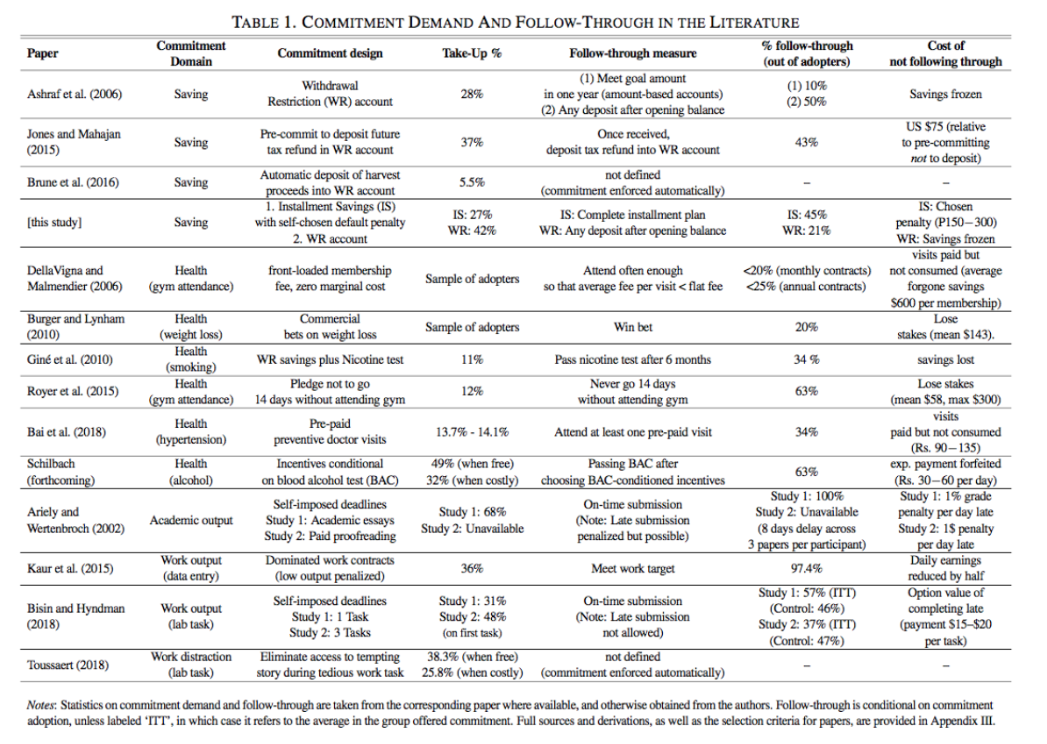

A closer look at the body of evidence suggests that participants in many other commitment device studies have failed to follow through at similar rates. In the paper for this study, Dr. John conducted a systematic review of the rates at which participants “followed through.” A table listing the studies and their follow-through rates is included below.

The review found a common pattern: as in this study, more than half of adopters often failed to follow through on their commitments. In many studies, participants incurred penalties, had their savings frozen, or bore other costs of breaking their commitments. While the reasons are not fully clear (the studies did not all collect individual-level measures the way the Philippines study did), the review suggests that this result is the norm, not the exception.

The fact that people are not great at knowing their future selves poses a challenge for commitment devices. There are a few possible responses, but each has its problems:

Mandate higher penalties. In the Philippines study, participants tended to set penalties that were too low to give them enough incentive to follow through on their commitments. Designers of commitment products can require penalties to reach a certain level; however, setting that level well requires fine-grained knowledge of the preferences and behavior of the specific product's users.

But...we have already seen that some people are going to default regardless, and higher penalties would cost them more. Larger commitments also tie up money that people may need in an emergency. Are we willing to up the stakes?

Provide education and assessment. Designers of commitment products can also educate prospective users about the risks and run tests to assess how likely users are to achieve their goals. If past track records and preference measures suggest that people are choosing commitments they are unlikely to meet, designers can decline to offer them the product.

But...this entails a substantial amount of paternalism and confidence in our ability to know people better than they know themselves, and the track record of changing people’s behavior through financial education is pretty lousy.

Reset our sights. Maybe commitments are more effective at maintaining a desirable status quo than at pushing people to new heights. In the systematic review of commitment devices above, the outlier was a study by Supreet Kaur, Michael Kremer, and Sendhil Mullainathan in which workers in India chose a daily output target and were penalized if they missed it (earning only half the usual pay per unit of output). In this case, follow-through was far higher than in any other study: workers met their targets 97% of the time. It appears that workers used the contracts to hold themselves to a minimum benchmark and keep themselves from going home early, rather than to achieve more.

The Philippines study, and indeed most commitment contract studies, show that we should be careful about measuring impacts based only on group averages. But there is also a possibly more important lesson. If people are not good at knowing their future selves, researchers do not have better predictive or targeting tools, and the studies seem to hurt more people than they help, we may want to rethink the costs and benefits of these programs, or at least put mechanisms in place to mitigate any harm they inadvertently cause.